mirror of

https://github.com/openimsdk/open-im-server.git

synced 2026-04-28 22:39:18 +08:00

Compare commits

55 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | a42a44e0a3 | | |
| | d8838ee6b8 | | |
| | f480f52e2d | | |
| | a23cbf13cf | | |
| | 498e26a942 | | |
| | e1990c179e | | |
| | 01c3d4725b | | |
| | bb4463349e | | |
| | 6b55cfd0b8 | | |
| | bb6462647a | | |
| | 60f4f67fb7 | | |
| | 4cd2713fd6 | | |
| | 119e8dbb2f | | |
| | c0194f6ef4 | | |
| | 1c1322e3d2 | | |
| | 4b192027aa | | |
| | be5a3e5a3f | | |
| | 5b697d5e95 | | |
| | 0efc235f45 | | |
| | 35bac04f58 | | |
| | 4c7e0295bf | | |
| | ceb669dfb8 | | |
| | 02142c55b2 | | |
| | 100926da0e | | |
| | f935d36715 | | |
| | 3cecbbc69a | | |
| | e4046994cf | | |
| | 403cfb6055 | | |
| | 1f7dfa33d7 | | |
| | a9153afc38 | | |
| | 7a13284b2e | | |
| | 75375adf62 | | |
| | b17c6ec924 | | |
| | fb74453c18 | | |
| | 82d238afbe | | |
| | 2c9a2239d8 | | |
| | 56fd78653c | | |
| | 872dcae27a | | |
| | 6ba0d618e4 | | |
| | a19f0e534f | | |
| | eeb16d4116 | | |
| | ae048417ee | | |
| | 7502b4ac0f | | |
| | 05ab3fcd06 | | |
| | 7698368957 | | |
| | 0d5fe4e6d6 | | |
| | 2ac54e09a6 | | |
| | 7153eeb178 | | |
| | fd42c6dced | | |
| | 69eb24f702 | | |
| | 65c1c412da | | |
| | 2496a16a88 | | |
| | d1af343b13 | | |
| | a580c15f6a | | |
| | 8e0cb6dc47 | | |

@@ -1,79 +0,0 @@

|

||||

# Copyright © 2023 OpenIM. All rights reserved.

|

||||

#

|

||||

# Licensed under the Apache License, Version 2.0 (the "License");

|

||||

# you may not use this file except in compliance with the License.

|

||||

# You may obtain a copy of the License at

|

||||

#

|

||||

# http://www.apache.org/licenses/LICENSE-2.0

|

||||

#

|

||||

# Unless required by applicable law or agreed to in writing, software

|

||||

# distributed under the License is distributed on an "AS IS" BASIS,

|

||||

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

# See the License for the specific language governing permissions and

|

||||

# limitations under the License.

|

||||

|

||||

#---------------Infrastructure configuration---------------------#

|

||||

etcd:

|

||||

etcdSchema: openim # the default is fine

|

||||

etcdAddr: [ 127.0.0.1:2379 ] # for a single-machine deployment, the default is fine

|

||||

userName:

|

||||

password:

|

||||

secret: openIM123

|

||||

|

||||

mysql:

|

||||

dbMysqlDatabaseName: admin_chat # database name; the default is fine

|

||||

|

||||

# Default administrator accounts

|

||||

admin:

|

||||

defaultAccount:

|

||||

account: [ "admin1", "admin2" ]

|

||||

defaultPassword: [ "password1", "password2" ]

|

||||

openIMUserID: [ "openIM123456", "openIMAdmin" ]

|

||||

faceURL: [ "", "" ]

|

||||

nickname: [ "admin1", "admin2" ]

|

||||

level: [ 1, 100 ]

|

||||

|

||||

|

||||

adminapi:

|

||||

openImAdminApiPort: [ 10009 ] # admin backend API service port; the default is fine; open this port or forward it through nginx

|

||||

listenIP: 0.0.0.0

|

||||

|

||||

chatapi:

|

||||

openImChatApiPort: [ 10008 ] # login and registration; the default is fine; open this port or forward it through nginx

|

||||

listenIP: 0.0.0.0

|

||||

|

||||

rpcport: # RPC service ports; the defaults are fine

|

||||

openImAdminPort: [ 30200 ]

|

||||

openImChatPort: [ 30300 ]

|

||||

|

||||

|

||||

rpcregistername: # RPC registered service names; the defaults are fine

|

||||

openImChatName: Chat

|

||||

openImAdminCMSName: Admin

|

||||

|

||||

chat:

|

||||

codeTTL: 300 # validity period of the SMS verification code (seconds)

|

||||

superVerificationCode: 666666 # super verification code

|

||||

alismsverify: # Alibaba Cloud SMS configuration; once your Alibaba Cloud application is approved, fill in the following four items

|

||||

accessKeyId:

|

||||

accessKeySecret:

|

||||

signName:

|

||||

verificationCodeTemplateCode:

|

||||

|

||||

|

||||

oss:

|

||||

tempDir: enterprise-temp # directory for uploads made with temporary credentials

|

||||

dataDir: enterprise-data # final storage directory

|

||||

aliyun:

|

||||

endpoint: https://oss-cn-chengdu.aliyuncs.com

|

||||

accessKeyID: ""

|

||||

accessKeySecret: ""

|

||||

bucket: ""

|

||||

tencent:

|

||||

BucketURL: ""

|

||||

serviceURL: https://cos.COS_REGION.myqcloud.com

|

||||

secretID: ""

|

||||

secretKey: ""

|

||||

sessionToken: ""

|

||||

bucket: ""

|

||||

use: "minio"

|

||||

@@ -1,27 +0,0 @@

|

||||

# Copyright © 2023 OpenIM. All rights reserved.

|

||||

#

|

||||

# Licensed under the Apache License, Version 2.0 (the "License");

|

||||

# you may not use this file except in compliance with the License.

|

||||

# You may obtain a copy of the License at

|

||||

#

|

||||

# http://www.apache.org/licenses/LICENSE-2.0

|

||||

#

|

||||

# Unless required by applicable law or agreed to in writing, software

|

||||

# distributed under the License is distributed on an "AS IS" BASIS,

|

||||

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

# See the License for the specific language governing permissions and

|

||||

# limitations under the License.

|

||||

|

||||

#more datasource-compose.yaml

|

||||

apiVersion: 1

|

||||

|

||||

datasources:

|

||||

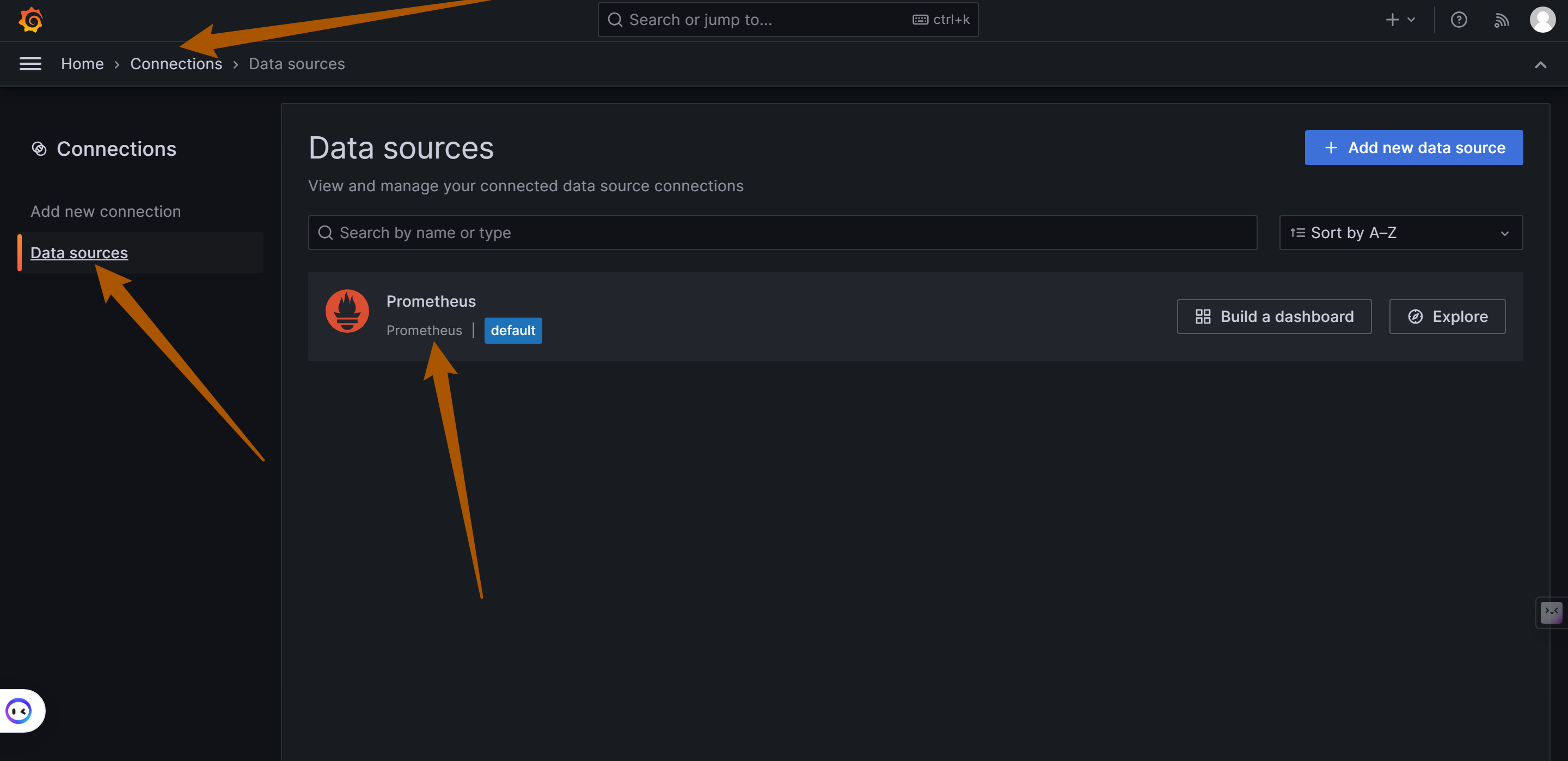

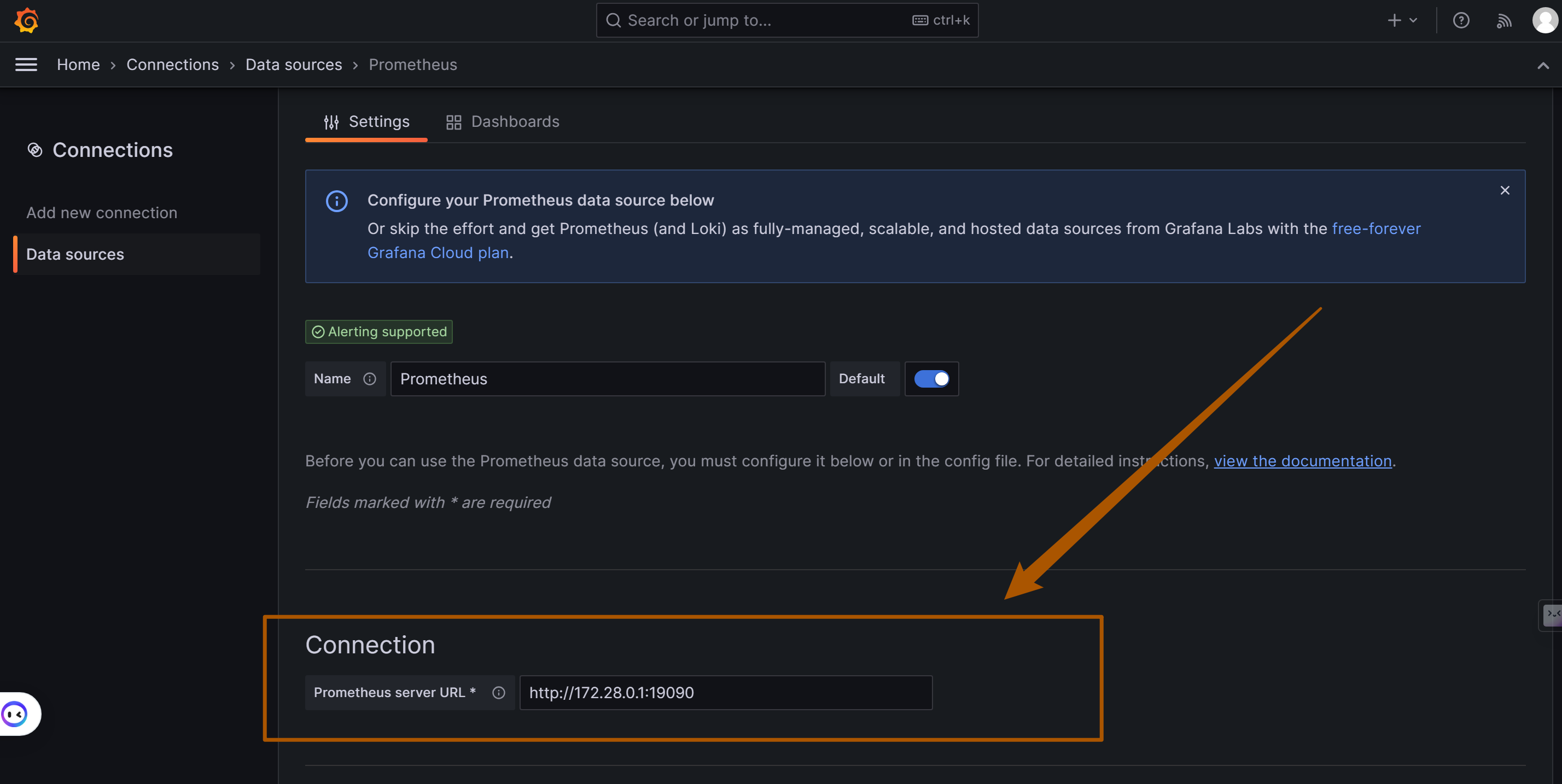

- name: Prometheus

|

||||

type: prometheus

|

||||

access: proxy

|

||||

orgId: 1

|

||||

url: http://127.0.0.1:9091

|

||||

basicAuth: false

|

||||

isDefault: true

|

||||

version: 1

|

||||

editable: true

|

||||

Binary file not shown.

File diff suppressed because it is too large


File diff suppressed because it is too large


@@ -1,85 +0,0 @@

|

||||

# Copyright © 2023 OpenIM. All rights reserved.

|

||||

#

|

||||

# Licensed under the Apache License, Version 2.0 (the "License");

|

||||

# you may not use this file except in compliance with the License.

|

||||

# You may obtain a copy of the License at

|

||||

#

|

||||

# http://www.apache.org/licenses/LICENSE-2.0

|

||||

#

|

||||

# Unless required by applicable law or agreed to in writing, software

|

||||

# distributed under the License is distributed on an "AS IS" BASIS,

|

||||

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

# See the License for the specific language governing permissions and

|

||||

# limitations under the License.

|

||||

|

||||

#more prometheus-compose.yml

|

||||

global:

|

||||

scrape_interval: 15s

|

||||

evaluation_interval: 15s

|

||||

external_labels:

|

||||

monitor: 'openIM-monitor'

|

||||

|

||||

scrape_configs:

|

||||

- job_name: 'prometheus'

|

||||

static_configs:

|

||||

- targets: ['localhost:9091']

|

||||

|

||||

- job_name: 'openIM-server'

|

||||

metrics_path: /metrics

|

||||

static_configs:

|

||||

- targets: ['localhost:10002']

|

||||

labels:

|

||||

group: 'api'

|

||||

|

||||

- targets: ['localhost:20110']

|

||||

labels:

|

||||

group: 'user'

|

||||

|

||||

- targets: ['localhost:20120']

|

||||

labels:

|

||||

group: 'friend'

|

||||

|

||||

- targets: ['localhost:20130']

|

||||

labels:

|

||||

group: 'message'

|

||||

|

||||

- targets: ['localhost:20140']

|

||||

labels:

|

||||

group: 'msg-gateway'

|

||||

|

||||

- targets: ['localhost:20150']

|

||||

labels:

|

||||

group: 'group'

|

||||

|

||||

- targets: ['localhost:20160']

|

||||

labels:

|

||||

group: 'auth'

|

||||

|

||||

- targets: ['localhost:20170']

|

||||

labels:

|

||||

group: 'push'

|

||||

|

||||

- targets: ['localhost:20120']

|

||||

labels:

|

||||

group: 'friend'

|

||||

|

||||

|

||||

- targets: ['localhost:20230']

|

||||

labels:

|

||||

group: 'conversation'

|

||||

|

||||

|

||||

- targets: ['localhost:21400', 'localhost:21401', 'localhost:21402', 'localhost:21403']

|

||||

labels:

|

||||

group: 'msg-transfer'

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

- job_name: 'node'

|

||||

scrape_interval: 8s

|

||||

static_configs:

|

||||

- targets: ['localhost:9100']

|

||||

|

||||

@@ -20,7 +20,6 @@ CHANGELOG/

|

||||

|

||||

# Ignore deployment-related files

|

||||

docker-compose.yaml

|

||||

deployments/

|

||||

|

||||

# Ignore assets

|

||||

assets/

|

||||

|

||||

@@ -1,90 +0,0 @@

|

||||

# Copyright © 2023 OpenIM. All rights reserved.

|

||||

#

|

||||

# Licensed under the Apache License, Version 2.0 (the "License");

|

||||

# you may not use this file except in compliance with the License.

|

||||

# You may obtain a copy of the License at

|

||||

#

|

||||

# http://www.apache.org/licenses/LICENSE-2.0

|

||||

#

|

||||

# Unless required by applicable law or agreed to in writing, software

|

||||

# distributed under the License is distributed on an "AS IS" BASIS,

|

||||

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

# See the License for the specific language governing permissions and

|

||||

# limitations under the License.

|

||||

|

||||

name: OpenIM API TEST

|

||||

|

||||

on:

|

||||

push:

|

||||

branches:

|

||||

- main

|

||||

paths-ignore:

|

||||

- "docs/**"

|

||||

- "README.md"

|

||||

- "README_zh-CN.md"

|

||||

- "CONTRIBUTING.md"

|

||||

pull_request:

|

||||

branches:

|

||||

- main

|

||||

paths-ignore:

|

||||

- "README.md"

|

||||

- "README_zh-CN.md"

|

||||

- "CONTRIBUTING.md"

|

||||

- "docs/**"

|

||||

|

||||

env:

|

||||

GO_VERSION: "1.19"

|

||||

GOLANGCI_VERSION: "v1.50.1"

|

||||

|

||||

jobs:

|

||||

execute-linux-systemd-scripts:

|

||||

name: Execute OpenIM script on ${{ matrix.os }}

|

||||

runs-on: ${{ matrix.os }}

|

||||

environment:

|

||||

name: openim

|

||||

strategy:

|

||||

matrix:

|

||||

go_version: ["1.20"]

|

||||

os: ["ubuntu-latest"]

|

||||

steps:

|

||||

- name: Checkout code

|

||||

uses: actions/checkout@v4

|

||||

|

||||

- name: Set up Go ${{ matrix.go_version }}

|

||||

uses: actions/setup-go@v4

|

||||

with:

|

||||

go-version: ${{ matrix.go_version }}

|

||||

id: go

|

||||

|

||||

- name: Install Task

|

||||

uses: arduino/setup-task@v1

|

||||

with:

|

||||

version: '3.x' # If available, use the latest major version that's compatible

|

||||

repo-token: ${{ secrets.GITHUB_TOKEN }}

|

||||

|

||||

- name: Docker Operations

|

||||

run: |

|

||||

curl -o docker-compose.yml https://raw.githubusercontent.com/OpenIMSDK/openim-docker/main/example/basic-openim-server-dependency.yml

|

||||

sudo docker compose up -d

|

||||

sudo sleep 60

|

||||

|

||||

- name: Module Operations

|

||||

run: |

|

||||

sudo make tidy

|

||||

sudo make tools.verify.go-gitlint

|

||||

|

||||

- name: Build, Start, Check Services and Print Logs

|

||||

run: |

|

||||

sudo ./scripts/install/install.sh -i && \

|

||||

sudo ./scripts/install/install.sh -s && \

|

||||

(echo "An error occurred, printing logs:" && sudo cat ./_output/logs/* 2>/dev/null)

|

||||

|

||||

- name: Run Test

|

||||

run: |

|

||||

sudo make test-api && \

|

||||

(echo "An error occurred, printing logs:" && sudo cat ./_output/logs/* 2>/dev/null)

|

||||

|

||||

- name: Stop Services

|

||||

run: |

|

||||

sudo ./scripts/install/install.sh -u && \

|

||||

(echo "An error occurred, printing logs:" && sudo cat ./_output/logs/* 2>/dev/null)

|

||||

@@ -52,6 +52,7 @@ jobs:

|

||||

type=ref,event=branch

|

||||

type=ref,event=pr

|

||||

type=semver,pattern={{version}}

|

||||

type=semver,pattern=v{{version}}

|

||||

type=semver,pattern={{major}}.{{minor}}

|

||||

type=semver,pattern={{major}}

|

||||

type=sha

|

||||

@@ -87,6 +88,16 @@ jobs:

|

||||

uses: docker/metadata-action@v5.0.0

|

||||

with:

|

||||

images: registry.cn-hangzhou.aliyuncs.com/openimsdk/openim-server

|

||||

# generate Docker tags based on the following events/attributes

|

||||

tags: |

|

||||

type=schedule

|

||||

type=ref,event=branch

|

||||

type=ref,event=pr

|

||||

type=semver,pattern={{version}}

|

||||

type=semver,pattern=v{{version}}

|

||||

type=semver,pattern={{major}}.{{minor}}

|

||||

type=semver,pattern={{major}}

|

||||

type=sha

|

||||

|

||||

- name: Log in to AliYun Docker Hub

|

||||

uses: docker/login-action@v3

|

||||

@@ -126,6 +137,7 @@ jobs:

|

||||

type=ref,event=branch

|

||||

type=ref,event=pr

|

||||

type=semver,pattern={{version}}

|

||||

type=semver,pattern=v{{version}}

|

||||

type=semver,pattern={{major}}.{{minor}}

|

||||

type=semver,pattern={{major}}

|

||||

type=sha

|

||||

|

||||

@@ -1,139 +0,0 @@

|

||||

# Copyright © 2023 OpenIM open source community. All rights reserved.

|

||||

#

|

||||

# Licensed under the Apache License, Version 2.0 (the "License");

|

||||

# you may not use this file except in compliance with the License.

|

||||

# You may obtain a copy of the License at

|

||||

#

|

||||

# http://www.apache.org/licenses/LICENSE-2.0

|

||||

#

|

||||

# Unless required by applicable law or agreed to in writing, software

|

||||

# distributed under the License is distributed on an "AS IS" BASIS,

|

||||

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

# See the License for the specific language governing permissions and

|

||||

# limitations under the License.

|

||||

|

||||

name: Build OpenIM Web Docker image

|

||||

|

||||

on:

|

||||

schedule:

|

||||

- cron: '30 3 * * *'

|

||||

push:

|

||||

branches:

|

||||

- main

|

||||

- release-*

|

||||

tags:

|

||||

- v*

|

||||

workflow_dispatch:

|

||||

|

||||

env:

|

||||

# Common versions

|

||||

GO_VERSION: "1.20"

|

||||

|

||||

jobs:

|

||||

build-openim-web-dockerhub:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- name: Checkout

|

||||

uses: actions/checkout@v4

|

||||

- name: Set up QEMU

|

||||

uses: docker/setup-qemu-action@v3

|

||||

- name: Set up Docker Buildx

|

||||

uses: docker/setup-buildx-action@v3

|

||||

|

||||

# docker.io/openim/openim-web:latest

|

||||

- name: Extract metadata (tags, labels) for Docker

|

||||

id: meta

|

||||

uses: docker/metadata-action@v5.0.0

|

||||

with:

|

||||

images: openim/openim-web

|

||||

# generate Docker tags based on the following events/attributes

|

||||

tags: |

|

||||

type=schedule

|

||||

type=ref,event=branch

|

||||

type=ref,event=pr

|

||||

type=semver,pattern={{version}}

|

||||

type=semver,pattern={{major}}.{{minor}}

|

||||

type=semver,pattern={{major}}

|

||||

type=sha

|

||||

|

||||

- name: Log in to Docker Hub

|

||||

uses: docker/login-action@v3

|

||||

with:

|

||||

username: ${{ secrets.DOCKER_USERNAME }}

|

||||

password: ${{ secrets.DOCKER_PASSWORD }}

|

||||

|

||||

- name: Build and push Docker image

|

||||

uses: docker/build-push-action@v5

|

||||

with:

|

||||

context: .

|

||||

file: ./build/images/openim-tools/openim-web/Dockerfile

|

||||

platforms: linux/amd64,linux/arm64

|

||||

push: ${{ github.event_name != 'pull_request' }}

|

||||

tags: ${{ steps.meta.outputs.tags }}

|

||||

labels: ${{ steps.meta.outputs.labels }}

|

||||

|

||||

build-openim-web-aliyun:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- name: Checkout

|

||||

uses: actions/checkout@v4

|

||||

- name: Set up QEMU

|

||||

uses: docker/setup-qemu-action@v3

|

||||

- name: Set up Docker Buildx

|

||||

uses: docker/setup-buildx-action@v3

|

||||

# registry.cn-hangzhou.aliyuncs.com/openimsdk/openim-web:latest

|

||||

- name: Extract metadata (tags, labels) for Docker

|

||||

id: meta2

|

||||

uses: docker/metadata-action@v5.0.0

|

||||

with:

|

||||

images: registry.cn-hangzhou.aliyuncs.com/openimsdk/openim-web

|

||||

|

||||

- name: Log in to AliYun Docker Hub

|

||||

uses: docker/login-action@v3

|

||||

with:

|

||||

registry: registry.cn-hangzhou.aliyuncs.com

|

||||

username: ${{ secrets.ALIREGISTRY_USERNAME }}

|

||||

password: ${{ secrets.ALIREGISTRY_TOKEN }}

|

||||

|

||||

- name: Build and push Docker image

|

||||

uses: docker/build-push-action@v5

|

||||

with:

|

||||

context: .

|

||||

file: ./build/images/openim-tools/openim-web/Dockerfile

|

||||

platforms: linux/amd64,linux/arm64

|

||||

push: ${{ github.event_name != 'pull_request' }}

|

||||

tags: ${{ steps.meta2.outputs.tags }}

|

||||

labels: ${{ steps.meta2.outputs.labels }}

|

||||

|

||||

build-openim-web-ghcr:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- name: Checkout

|

||||

uses: actions/checkout@v4

|

||||

- name: Set up QEMU

|

||||

uses: docker/setup-qemu-action@v3

|

||||

- name: Set up Docker Buildx

|

||||

uses: docker/setup-buildx-action@v3

|

||||

# ghcr.io/openimsdk/openim-web:latest

|

||||

- name: Extract metadata (tags, labels) for Docker

|

||||

id: meta2

|

||||

uses: docker/metadata-action@v5.0.0

|

||||

with:

|

||||

images: ghcr.io/openimsdk/openim-web

|

||||

|

||||

- name: Log in to GitHub Container Registry

|

||||

uses: docker/login-action@v3

|

||||

with:

|

||||

registry: ghcr.io

|

||||

username: ${{ github.repository_owner }}

|

||||

password: ${{ secrets.GITHUB_TOKEN }}

|

||||

|

||||

- name: Build and push Docker image

|

||||

uses: docker/build-push-action@v5

|

||||

with:

|

||||

context: .

|

||||

file: ./build/images/openim-tools/openim-web/Dockerfile

|

||||

platforms: linux/amd64,linux/arm64

|

||||

push: ${{ github.event_name != 'pull_request' }}

|

||||

tags: ${{ steps.meta2.outputs.tags }}

|

||||

labels: ${{ steps.meta2.outputs.labels }}

|

||||

@@ -22,6 +22,9 @@ on:

|

||||

# run e2e test every 4 hours

|

||||

- cron: 0 */4 * * *

|

||||

|

||||

env:

|

||||

CALLBACK_ENABLE: true

|

||||

|

||||

jobs:

|

||||

build:

|

||||

name: Test

|

||||

@@ -70,9 +73,9 @@ jobs:

|

||||

|

||||

- name: Docker Operations

|

||||

run: |

|

||||

curl -o docker-compose.yml https://raw.githubusercontent.com/OpenIMSDK/openim-docker/main/example/basic-openim-server-dependency.yml

|

||||

sudo make init

|

||||

sudo docker compose up -d

|

||||

sudo sleep 60

|

||||

sudo sleep 20

|

||||

|

||||

- name: Module Operations

|

||||

run: |

|

||||

@@ -97,4 +100,15 @@ jobs:

|

||||

|

||||

- name: Exec OpenIM System uninstall

|

||||

run: |

|

||||

sudo ./scripts/install/install.sh -u

|

||||

sudo ./scripts/install/install.sh -u

|

||||

|

||||

- name: gobenchdata publish

|

||||

uses: bobheadxi/gobenchdata@v1

|

||||

with:

|

||||

PRUNE_COUNT: 30

|

||||

GO_TEST_FLAGS: -cpu 1,2

|

||||

PUBLISH: true

|

||||

PUBLISH_BRANCH: gh-pages

|

||||

env:

|

||||

GITHUB_TOKEN: ${{ secrets.BOT_GITHUB_TOKEN }}

|

||||

continue-on-error: true

|

||||

@@ -41,7 +41,7 @@ jobs:

|

||||

# ./*.md all markdown files in the root directory

|

||||

args: --verbose -E -i --no-progress --exclude-path './CHANGELOG' './**/*.md'

|

||||

env:

|

||||

GITHUB_TOKEN: ${{secrets.GH_PAT}}

|

||||

GITHUB_TOKEN: ${{secrets.BOT_GITHUB_TOKEN}}

|

||||

|

||||

- name: Create Issue From File

|

||||

if: env.lychee_exit_code != 0

|

||||

|

||||

@@ -51,4 +51,5 @@ jobs:

|

||||

OCO_EMOJI: false

|

||||

OCO_MODEL: gpt-3.5-turbo-16k

|

||||

OCO_LANGUAGE: en

|

||||

OCO_PROMPT_MODULE: conventional-commit

|

||||

OCO_PROMPT_MODULE: conventional-commit

|

||||

continue-on-error: true

|

||||

@@ -156,9 +156,9 @@ jobs:

|

||||

|

||||

- name: Docker Operations

|

||||

run: |

|

||||

curl -o docker-compose.yml https://raw.githubusercontent.com/OpenIMSDK/openim-docker/main/example/basic-openim-server-dependency.yml

|

||||

sudo make init

|

||||

sudo docker compose up -d

|

||||

sudo sleep 60

|

||||

sudo sleep 20

|

||||

|

||||

- name: Module Operations

|

||||

run: |

|

||||

@@ -195,4 +195,5 @@ jobs:

|

||||

|

||||

- name: Test Docker Build

|

||||

run: |

|

||||

sudo make init

|

||||

sudo make image

|

||||

@@ -391,3 +391,5 @@ Sessionx.vim

|

||||

dist/

|

||||

.env

|

||||

config/config.yaml

|

||||

config/alertmanager.yml

|

||||

config/prometheus.yml

|

||||

+3

-2

@@ -25,7 +25,8 @@ WORKDIR ${SERVER_WORKDIR}

|

||||

|

||||

# Copy scripts and binary files to the production image

|

||||

COPY --from=builder ${OPENIM_SERVER_BINDIR} /openim/openim-server/_output/bin

|

||||

# COPY --from=builder ${OPENIM_SERVER_CMDDIR} /openim/openim-server/scripts

|

||||

# COPY --from=builder ${SERVER_WORKDIR}/config /openim/openim-server/config

|

||||

COPY --from=builder ${OPENIM_SERVER_CMDDIR} /openim/openim-server/scripts

|

||||

COPY --from=builder ${SERVER_WORKDIR}/config /openim/openim-server/config

|

||||

COPY --from=builder ${SERVER_WORKDIR}/deployments /openim/openim-server/deployments

|

||||

|

||||

CMD ["/openim/openim-server/scripts/docker-start-all.sh"]

|

||||

|

||||

+1

-1

@@ -30,7 +30,7 @@

|

||||

</p>

|

||||

|

||||

## 🟢 Scan the WeChat QR code to join the group chat

|

||||

<img src="https://openim-1253691595.cos.ap-nanjing.myqcloud.com/WechatIMG20.jpeg" width="300">

|

||||

<img src="./docs/images/Wechat.jpg" width="300">

|

||||

|

||||

|

||||

## Ⓜ️ About OpenIM

|

||||

|

||||

@@ -231,7 +231,7 @@ Before you start, please make sure your changes are in demand. The best for that

|

||||

- [OpenIM Makefile Utilities](https://github.com/openimsdk/open-im-server/tree/main/docs/contrib/util-makefile.md)

|

||||

- [OpenIM Script Utilities](https://github.com/openimsdk/open-im-server/tree/main/docs/contrib/util-scripts.md)

|

||||

- [OpenIM Versioning](https://github.com/openimsdk/open-im-server/tree/main/docs/contrib/version.md)

|

||||

|

||||

- [Manage backend and monitor deployment](https://github.com/openimsdk/open-im-server/tree/main/docs/contrib/prometheus-grafana.md)

|

||||

|

||||

## :busts_in_silhouette: Community

|

||||

|

||||

|

||||

+30

-13

@@ -18,11 +18,13 @@ import (

|

||||

"context"

|

||||

"fmt"

|

||||

"net"

|

||||

"net/http"

|

||||

_ "net/http/pprof"

|

||||

"os"

|

||||

"os/signal"

|

||||

"strconv"

|

||||

|

||||

ginProm "github.com/openimsdk/open-im-server/v3/pkg/common/ginprometheus"

|

||||

"github.com/openimsdk/open-im-server/v3/pkg/common/prommetrics"

|

||||

"syscall"

|

||||

"time"

|

||||

|

||||

"github.com/OpenIMSDK/protocol/constant"

|

||||

"github.com/OpenIMSDK/tools/discoveryregistry"

|

||||

@@ -33,6 +35,8 @@ import (

|

||||

"github.com/openimsdk/open-im-server/v3/pkg/common/config"

|

||||

"github.com/openimsdk/open-im-server/v3/pkg/common/db/cache"

|

||||

kdisc "github.com/openimsdk/open-im-server/v3/pkg/common/discoveryregister"

|

||||

ginProm "github.com/openimsdk/open-im-server/v3/pkg/common/ginprometheus"

|

||||

"github.com/openimsdk/open-im-server/v3/pkg/common/prommetrics"

|

||||

)

|

||||

|

||||

func main() {

|

||||

@@ -51,13 +55,12 @@ func run(port int, proPort int) error {

|

||||

if port == 0 || proPort == 0 {

|

||||

err := "port or proPort is empty:" + strconv.Itoa(port) + "," + strconv.Itoa(proPort)

|

||||

log.ZError(context.Background(), err, nil)

|

||||

|

||||

return fmt.Errorf("%s", err)

|

||||

}

|

||||

|

||||

rdb, err := cache.NewRedis()

|

||||

if err != nil {

|

||||

log.ZError(context.Background(), "Failed to initialize Redis", err)

|

||||

|

||||

return err

|

||||

}

|

||||

log.ZInfo(context.Background(), "api start init discov client")

|

||||

@@ -68,30 +71,29 @@ func run(port int, proPort int) error {

|

||||

client, err = kdisc.NewDiscoveryRegister(config.Config.Envs.Discovery)

|

||||

if err != nil {

|

||||

log.ZError(context.Background(), "Failed to initialize discovery register", err)

|

||||

|

||||

return err

|

||||

}

|

||||

|

||||

if err = client.CreateRpcRootNodes(config.Config.GetServiceNames()); err != nil {

|

||||

log.ZError(context.Background(), "Failed to create RPC root nodes", err)

|

||||

|

||||

return err

|

||||

}

|

||||

|

||||

log.ZInfo(context.Background(), "api register public config to discov")

|

||||

if err = client.RegisterConf2Registry(constant.OpenIMCommonConfigKey, config.Config.EncodeConfig()); err != nil {

|

||||

log.ZError(context.Background(), "Failed to register public config to discov", err)

|

||||

|

||||

return err

|

||||

}

|

||||

|

||||

log.ZInfo(context.Background(), "api register public config to discov success")

|

||||

router := api.NewGinRouter(client, rdb)

|

||||

//////////////////////////////

|

||||

if config.Config.Prometheus.Enable {

|

||||

p := ginProm.NewPrometheus("app", prommetrics.GetGinCusMetrics("Api"))

|

||||

p.SetListenAddress(fmt.Sprintf(":%d", proPort))

|

||||

p.Use(router)

|

||||

}

|

||||

/////////////////////////////////

|

||||

log.ZInfo(context.Background(), "api init router success")

|

||||

|

||||

var address string

|

||||

if config.Config.Api.ListenIP != "" {

|

||||

address = net.JoinHostPort(config.Config.Api.ListenIP, strconv.Itoa(port))

|

||||

@@ -100,10 +102,25 @@ func run(port int, proPort int) error {

|

||||

}

|

||||

log.ZInfo(context.Background(), "start api server", "address", address, "OpenIM version", config.Version)

|

||||

|

||||

err = router.Run(address)

|

||||

if err != nil {

|

||||

log.ZError(context.Background(), "api run failed", err, "address", address)

|

||||

server := http.Server{Addr: address, Handler: router}

|

||||

go func() {

|

||||

err = server.ListenAndServe()

|

||||

if err != nil && err != http.ErrServerClosed {

|

||||

log.ZError(context.Background(), "api run failed", err, "address", address)

|

||||

os.Exit(1)

|

||||

}

|

||||

}()

|

||||

|

||||

sigs := make(chan os.Signal, 1)

|

||||

signal.Notify(sigs, syscall.SIGINT, syscall.SIGTERM, syscall.SIGQUIT)

|

||||

<-sigs

|

||||

|

||||

ctx, cancel := context.WithTimeout(context.Background(), 15*time.Second)

|

||||

defer cancel()

|

||||

|

||||

// graceful shutdown operation.

|

||||

if err := server.Shutdown(ctx); err != nil {

|

||||

log.ZError(context.Background(), "failed to api-server shutdown", err)

|

||||

return err

|

||||

}

|

||||

|

||||

|

||||

@@ -23,6 +23,7 @@ func main() {

|

||||

msgGatewayCmd.AddWsPortFlag()

|

||||

msgGatewayCmd.AddPortFlag()

|

||||

msgGatewayCmd.AddPrometheusPortFlag()

|

||||

|

||||

if err := msgGatewayCmd.Exec(); err != nil {

|

||||

panic(err.Error())

|

||||

}

|

||||

|

||||

@@ -0,0 +1,16 @@

|

||||

{{ define "email.to.html" }}

|

||||

{{ range .Alerts }}

|

||||

<!-- Begin of OpenIM Alert -->

|

||||

<div style="border:1px solid #ccc; padding:10px; margin-bottom:10px;">

|

||||

<h3>OpenIM Alert</h3>

|

||||

<p><strong>Alert Program:</strong> Prometheus Alert</p>

|

||||

<p><strong>Severity Level:</strong> {{ .Labels.severity }}</p>

|

||||

<p><strong>Alert Type:</strong> {{ .Labels.alertname }}</p>

|

||||

<p><strong>Affected Host:</strong> {{ .Labels.instance }}</p>

|

||||

<p><strong>Affected Service:</strong> {{ .Labels.job }}</p>

|

||||

<p><strong>Alert Subject:</strong> {{ .Annotations.summary }}</p>

|

||||

<p><strong>Trigger Time:</strong> {{ .StartsAt.Format "2006-01-02 15:04:05" }}</p>

|

||||

</div>

|

||||

<!-- End of OpenIM Alert -->

|

||||

{{ end }}

|

||||

{{ end }}

|

||||

@@ -0,0 +1,22 @@

|

||||

groups:

|

||||

- name: instance_down

|

||||

rules:

|

||||

- alert: InstanceDown

|

||||

expr: up == 0

|

||||

for: 1m

|

||||

labels:

|

||||

severity: critical

|

||||

annotations:

|

||||

summary: "Instance {{ $labels.instance }} down"

|

||||

description: "{{ $labels.instance }} of job {{ $labels.job }} has been down for more than 1 minute."

|

||||

|

||||

- name: database_insert_failure_alerts

|

||||

rules:

|

||||

- alert: DatabaseInsertFailed

|

||||

expr: (increase(msg_insert_redis_failed_total[5m]) > 0) or (increase(msg_insert_mongo_failed_total[5m]) > 0)

|

||||

for: 1m

|

||||

labels:

|

||||

severity: critical

|

||||

annotations:

|

||||

summary: "Increase in MsgInsertRedisFailedCounter or MsgInsertMongoFailedCounter detected"

|

||||

description: "Either MsgInsertRedisFailedCounter or MsgInsertMongoFailedCounter has increased in the last 5 minutes, indicating failures in message insert operations to Redis or MongoDB. Redis or MongoDB may have crashed."

|

||||

File diff suppressed because it is too large


@@ -0,0 +1,36 @@

|

||||

# Examples Directory

|

||||

|

||||

Welcome to the `examples` directory of our project! This directory contains a collection of example files that demonstrate various configurations and setups for our software. These examples are designed to provide you with templates that can be used as a starting point for your own configurations.

|

||||

|

||||

## Overview

|

||||

|

||||

In this directory, you'll find examples for a variety of use cases. Each file is a template with default values and configurations that illustrate best practices and typical scenarios. Whether you're just getting started or looking to implement a complex setup, these examples should help you get on the right track.

|

||||

|

||||

## Structure

|

||||

|

||||

Here's a quick overview of what you'll find in this directory:

|

||||

|

||||

+ `env-example.yaml`: Demonstrates how to set up environment variables.

|

||||

+ `openim-example.yaml`: A sample configuration file for the OpenIM application.

|

||||

+ `prometheus-example.yml`: An example Prometheus configuration for monitoring.

|

||||

+ `alertmanager-example.yml`: A template for setting up Alertmanager configurations.

|

||||

|

||||

## How to Use These Examples

|

||||

|

||||

To use these examples, simply copy the relevant file to your working directory and rename it as needed (e.g., removing the `-example` suffix). Then, modify the file according to your requirements.

|

||||

|

||||

### Tips for Using Example Files:

|

||||

|

||||

1. **Read the Comments**: Each file contains comments that explain various sections and settings. Make sure to read these comments for a better understanding of how to customize the file.

|

||||

2. **Check for Required Changes**: Some examples might require mandatory changes (like setting specific environment variables) before they can be used effectively.

|

||||

3. **Version Compatibility**: Ensure that the example files are compatible with the version of the software you are using.

|

||||

|

||||

## Contributing

|

||||

|

||||

If you have a configuration that you believe would be beneficial to others, please feel free to contribute by opening a pull request with your proposed changes. We appreciate contributions that expand our examples with new scenarios and use cases.

|

||||

|

||||

## Support

|

||||

|

||||

If you encounter any issues or have questions regarding the example files, please open an issue on our repository. Our community is here to help you navigate through any challenges you might face.

|

||||

|

||||

Thank you for exploring our examples, and we hope they will be helpful in setting up and configuring your environment!

|

||||

@@ -0,0 +1,33 @@

+###################### AlertManager Configuration ######################
+# AlertManager configuration using environment variables
+#
+# Resolve timeout
+# SMTP configuration for sending alerts
+# Templates for email notifications
+# Routing configurations for alerts
+# Receiver configurations
+global:
+  resolve_timeout: 5m
+  smtp_from: alert@openim.io
+  smtp_smarthost: smtp.163.com:465
+  smtp_auth_username: alert@openim.io
+  smtp_auth_password: YOURAUTHPASSWORD
+  smtp_require_tls: false
+  smtp_hello: xxx监控告警
+
+templates:
+  - /etc/alertmanager/email.tmpl
+
+route:
+  group_by: ['alertname']
+  group_wait: 5s
+  group_interval: 5s
+  repeat_interval: 5m
+  receiver: email
+receivers:
+  - name: email
+    email_configs:
+      - to: 'alert@example.com'
+        html: '{{ template "email.to.html" . }}'
+        headers: { Subject: "[OPENIM-SERVER]Alarm" }
+        send_resolved: true

@@ -35,26 +35,6 @@ zookeeper:

   username: ''
   password: ''
 
-###################### Mysql ######################
-# MySQL configuration
-# Currently, only single machine setup is supported
-#
-# Maximum number of open connections
-# Maximum number of idle connections
-# Maximum lifetime in seconds a connection can be reused
-# Log level: 1=silent, 2=error, 3=warn, 4=info
-# Slow query threshold in milliseconds
-mysql:
-  address: [ 172.28.0.1:13306 ]
-  username: root
-  password: openIM123
-  database: openIM_v3
-  maxOpenConn: 1000
-  maxIdleConn: 100
-  maxLifeTime: 60
-  logLevel: 4
-  slowThreshold: 500
-
 ###################### Mongo ######################
 # MongoDB configuration
 # If uri is not empty, it will be used directly

@@ -135,14 +115,14 @@ api:

 # minio.signEndpoint is minio public network address
 object:
   enable: "minio"
-  apiURL: "http://127.0.0.1:10002"
+  apiURL: "http://172.28.0.1:10002"
   minio:
     bucket: "openim"
     endpoint: "http://172.28.0.1:10005"
     accessKeyID: "root"
     secretAccessKey: "openIM123"
     sessionToken: ''
-    signEndpoint: "http://127.0.0.1:10005"
+    signEndpoint: "http://172.28.0.1:10005"
     publicRead: false
   cos:
     bucketURL: https://temp-1252357374.cos.ap-chengdu.myqcloud.com

@@ -158,13 +138,21 @@ object:

     accessKeySecret: ''
     sessionToken: ''
     publicRead: false
+  kodo:
+    endpoint: "http://s3.cn-east-1.qiniucs.com"
+    bucket: "demo-9999999"
+    bucketURL: "http://your.domain.com"
+    accessKeyID: ''
+    accessKeySecret: ''
+    sessionToken: ''
+    publicRead: false
 
 ###################### RPC Port Configuration ######################
 # RPC service ports
 # These ports are passed into the program by the script and are not recommended to modify
 # For launching multiple programs, just fill in multiple ports separated by commas
 # For example, [10110, 10111]
-rpcPort:
+rpcPort:
   openImUserPort: [ 10110 ]
   openImFriendPort: [ 10120 ]
   openImMessagePort: [ 10130 ]
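
The comments in this hunk say that several instances are launched by listing several ports separated by commas, for example `[10110, 10111]`. As a small illustration, the sketch below turns a comma-separated string into that list form. `ports_to_yaml_list` is a hypothetical helper written for this note; how the project's own scripts build the list is not shown in this diff.

```bash
#!/usr/bin/env bash
# Hypothetical helper: turn "10110,10111" into the YAML flow list "[ 10110, 10111 ]".
ports_to_yaml_list() {
  local csv=${1// /}          # drop any spaces around the commas
  local port out=""
  local -a ports
  IFS=',' read -r -a ports <<< "$csv"
  for port in "${ports[@]}"; do
    # Reject anything that is not a plain decimal number.
    if [[ ! $port =~ ^[0-9]+$ ]]; then
      echo "invalid port: $port" >&2
      return 1
    fi
    out+="${out:+, }$port"
  done
  printf '[ %s ]\n' "$out"
}
```

For instance, `ports_to_yaml_list "10110, 10111"` prints a value that could be pasted after `openImUserPort:`.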

@@ -198,7 +186,7 @@ rpcRegisterName:

 # Whether to output in json format
 # Whether to include stack trace in logs
 log:
-  storageLocation: ./logs/
+  storageLocation: ../logs/
   rotationTime: 24
   remainRotationCount: 2
   remainLogLevel: 6

@@ -312,7 +300,7 @@ iosPush:

 # Timeout in seconds
 # Whether to continue execution if callback fails
 callback:
-  url:
+  url: ""
   beforeSendSingleMsg:
     enable: false
     timeout: 5

@@ -320,6 +308,7 @@ callback:

   afterSendSingleMsg:
     enable: false
     timeout: 5
+    failedContinue: true
   beforeSendGroupMsg:
     enable: false
     timeout: 5

@@ -327,6 +316,7 @@ callback:

   afterSendGroupMsg:
     enable: false
     timeout: 5
+    failedContinue: true
   msgModify:
     enable: false
     timeout: 5

@@ -334,12 +324,15 @@ callback:

   userOnline:
     enable: false
     timeout: 5
+    failedContinue: true
   userOffline:
     enable: false
     timeout: 5
+    failedContinue: true
   userKickOff:
     enable: false
     timeout: 5
+    failedContinue: true
   offlinePush:
     enable: false
     timeout: 5

@@ -364,6 +357,10 @@ callback:

     enable: false
     timeout: 5
     failedContinue: true
+  afterCreateGroup:
+    enable: false
+    timeout: 5
+    failedContinue: true
   beforeMemberJoinGroup:
     enable: false
     timeout: 5

@@ -372,18 +369,129 @@ callback:

     enable: false
     timeout: 5
     failedContinue: true
+  afterSetGroupMemberInfo:
+    enable: false
+    timeout: 5
+    failedContinue: true
   setMessageReactionExtensions:
     enable: false
     timeout: 5
     failedContinue: true
-
+  quitGroup:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  killGroupMember:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  dismissGroup:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  joinGroup:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  groupMsgRead:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  singleMsgRead:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  updateUserInfo:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  beforeUserRegister:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  afterUserRegister:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  transferGroupOwner:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  beforeSetFriendRemark:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  afterSetFriendRemark:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  afterGroupMsgRead:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  afterGroupMsgRevoke:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  afterJoinGroup:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  beforeInviteUserToGroup:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  joinGroupAfter:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  setGroupInfoAfter:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  setGroupInfoBefore:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  revokeMsgAfter:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  addBlackBefore:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  addFriendAfter:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  addFriendAgreeBefore:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  deleteFriendAfter:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  importFriendsBefore:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  importFriendsAfter:
+    enable: false
+    timeout: 5
+    failedContinue: true
+  removeBlackAfter:
+    enable: false
+    timeout: 5
+    failedContinue: true
 ###################### Prometheus ######################
 # Prometheus configuration for various services
 # The number of Prometheus ports per service needs to correspond to rpcPort
 # The number of ports needs to be consistent with msg_transfer_service_num in script/path_info.sh
 prometheus:
-  enable: true
-  prometheusUrl: "https://openim.prometheus"
+  enable: false
+  prometheusUrl: 172.28.0.1:13000
   apiPrometheusPort: [20100]
   userPrometheusPort: [ 20110 ]
   friendPrometheusPort: [ 20120 ]
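
The Prometheus comments above require each service to list as many Prometheus ports as it has RPC ports. A minimal sketch of that consistency check follows, assuming the values are copied out of the YAML as flow lists such as `[ 10110 ]`. `count_ports` and `same_port_count` are hypothetical helpers for illustration and do not parse the YAML file itself.

```bash
#!/usr/bin/env bash
# Hypothetical helper: count the entries of a flow list such as "[ 10110, 10111 ]".
count_ports() {
  local list commas
  # Strip brackets and spaces so only "10110,10111" remains.
  list=$(printf '%s' "$1" | tr -d '[] ')
  if [[ -z $list ]]; then
    echo 0
    return
  fi
  # Keep only the commas; the number of entries is commas + 1.
  commas=${list//[^,]/}
  echo $(( ${#commas} + 1 ))
}

# Succeeds when both lists have the same number of ports.
same_port_count() {
  [[ $(count_ports "$1") -eq $(count_ports "$2") ]]
}
```

With the values from this file, `same_port_count "[ 10110 ]" "[ 20110 ]"` succeeds, which matches `openImUserPort` and `userPrometheusPort` each listing one port.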

@@ -1,23 +1,9 @@

-# Copyright © 2023 OpenIM. All rights reserved.
-#
-# Licensed under the Apache License, Version 2.0 (the "License");
-# you may not use this file except in compliance with the License.
-# You may obtain a copy of the License at
-#
-# http://www.apache.org/licenses/LICENSE-2.0
-#
-# Unless required by applicable law or agreed to in writing, software
-# distributed under the License is distributed on an "AS IS" BASIS,
-# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
-# See the License for the specific language governing permissions and
-# limitations under the License.
-
 # ======================================
 # ========= Basic Configuration ========
 # ======================================
 
 # The user for authentication or system operations.
-# Default: USER=root
+# Default: OPENIM_USER=root
 USER=root
 
 # Password associated with the specified user for authentication.

@@ -29,8 +15,8 @@ PASSWORD=openIM123

 MINIO_ENDPOINT=http://172.28.0.1:10005
 
 # Base URL for the application programming interface (API).
-# Default: API_URL=http://172.0.0.1:10002
-API_URL=http://172.0.0.1:10002
+# Default: API_URL=http://172.28.0.1:10002
+API_URL=http://172.28.0.1:10002
 
 # Directory path for storing data files or related information.
 # Default: DATA_DIR=./

@@ -55,50 +41,19 @@ DOCKER_BRIDGE_SUBNET=172.28.0.0/16

 # Default: DOCKER_BRIDGE_GATEWAY=172.28.0.1
 DOCKER_BRIDGE_GATEWAY=172.28.0.1
 
-# Address or hostname for the MySQL network.
-# Default: MYSQL_NETWORK_ADDRESS=172.28.0.2
-MYSQL_NETWORK_ADDRESS=172.28.0.2
-
-# Address or hostname for the MongoDB network.
-# Default: MONGO_NETWORK_ADDRESS=172.28.0.3
-MONGO_NETWORK_ADDRESS=172.28.0.3
-
-# Address or hostname for the Redis network.
-# Default: REDIS_NETWORK_ADDRESS=172.28.0.4
-REDIS_NETWORK_ADDRESS=172.28.0.4
-
-# Address or hostname for the Kafka network.
-# Default: KAFKA_NETWORK_ADDRESS=172.28.0.5
-KAFKA_NETWORK_ADDRESS=172.28.0.5
-
-# Address or hostname for the ZooKeeper network.
-# Default: ZOOKEEPER_NETWORK_ADDRESS=172.28.0.6
-ZOOKEEPER_NETWORK_ADDRESS=172.28.0.6
-
-# Address or hostname for the MinIO network.
-# Default: MINIO_NETWORK_ADDRESS=172.28.0.7
-MINIO_NETWORK_ADDRESS=172.28.0.7
-
-# Address or hostname for the OpenIM web network.
-# Default: OPENIM_WEB_NETWORK_ADDRESS=172.28.0.8
-OPENIM_WEB_NETWORK_ADDRESS=172.28.0.8
-
-# Address or hostname for the OpenIM server network.
-# Default: OPENIM_SERVER_NETWORK_ADDRESS=172.28.0.9
-OPENIM_SERVER_NETWORK_ADDRESS=172.28.0.9
-
-# Address or hostname for the OpenIM chat network.
-# Default: OPENIM_CHAT_NETWORK_ADDRESS=172.28.0.10
-OPENIM_CHAT_NETWORK_ADDRESS=172.28.0.10
-
-# Address or hostname for the Prometheus network.
-# Default: PROMETHEUS_NETWORK_ADDRESS=172.28.0.11
-PROMETHEUS_NETWORK_ADDRESS=172.28.0.11
-
-# Address or hostname for the Grafana network.
-# Default: GRAFANA_NETWORK_ADDRESS=172.28.0.12
-GRAFANA_NETWORK_ADDRESS=172.28.0.12
-
+MONGO_NETWORK_ADDRESS=172.28.0.2
+REDIS_NETWORK_ADDRESS=172.28.0.3
+KAFKA_NETWORK_ADDRESS=172.28.0.4
+ZOOKEEPER_NETWORK_ADDRESS=172.28.0.5
+MINIO_NETWORK_ADDRESS=172.28.0.6
+OPENIM_WEB_NETWORK_ADDRESS=172.28.0.7
+OPENIM_SERVER_NETWORK_ADDRESS=172.28.0.8
+OPENIM_CHAT_NETWORK_ADDRESS=172.28.0.9
+PROMETHEUS_NETWORK_ADDRESS=172.28.0.10
+GRAFANA_NETWORK_ADDRESS=172.28.0.11
+NODE_EXPORTER_NETWORK_ADDRESS=172.28.0.12
+OPENIM_ADMIN_FRONT_NETWORK_ADDRESS=172.28.0.13
+ALERT_MANAGER_NETWORK_ADDRESS=172.28.0.14
 
 # ===============================================
 # = Component Extension Configuration =
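
After this change the `*_NETWORK_ADDRESS` values run consecutively from `172.28.0.2` (MongoDB) to `172.28.0.14` (Alertmanager), directly after the bridge gateway at `172.28.0.1`. The sketch below shows how such a block could be generated instead of numbered by hand. `assign_addresses` is a hypothetical helper and is not how the repository produces the file.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print NAME_NETWORK_ADDRESS=<prefix>.<n> lines,
# starting at host number <first> and counting up by one per service.
# Usage: assign_addresses <prefix> <first> NAME...
assign_addresses() {
  local prefix=$1 host=$2 name
  shift 2
  for name in "$@"; do
    printf '%s_NETWORK_ADDRESS=%s.%d\n' "$name" "$prefix" "$host"
    host=$((host + 1))
  done
}

assign_addresses 172.28.0 2 MONGO REDIS KAFKA ZOOKEEPER MINIO
```

Because the host number is a simple counter, removing a service (as MySQL is removed here) shifts every later address, which is why the matching `*_ADDRESS` defaults further down change as well. The sketch does not check that the counter stays inside the subnet.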

@@ -108,38 +63,24 @@ GRAFANA_NETWORK_ADDRESS=172.28.0.12

 # ----- ZooKeeper Configuration -----
 # Address or hostname for the ZooKeeper service.
 # Default: ZOOKEEPER_ADDRESS=172.28.0.1
-ZOOKEEPER_ADDRESS=172.28.0.6
+ZOOKEEPER_ADDRESS=172.28.0.5
 
 # Port for ZooKeeper service.
 # Default: ZOOKEEPER_PORT=12181
 ZOOKEEPER_PORT=12181
 
-# ----- MySQL Configuration -----
-
-# Address or hostname for the MySQL service.
-# Default: MYSQL_ADDRESS=172.28.0.1
-MYSQL_ADDRESS=172.28.0.2
-
-# Port on which MySQL database service is running.
-# Default: MYSQL_PORT=13306
-MYSQL_PORT=13306
-
-# Password to authenticate with the MySQL database service.
-# Default: MYSQL_PASSWORD=openIM123
-MYSQL_PASSWORD=openIM123
-
 # ----- MongoDB Configuration -----
 # Address or hostname for the MongoDB service.
 # Default: MONGO_ADDRESS=172.28.0.1
-MONGO_ADDRESS=172.28.0.3
+MONGO_ADDRESS=172.28.0.2
 
 # Port on which MongoDB service is running.
 # Default: MONGO_PORT=37017
-MONGO_PORT=37017
+# MONGO_PORT=37017
 
 # Username to authenticate with the MongoDB service.
 # Default: MONGO_USERNAME=root
-MONGO_USERNAME=root
+# MONGO_USERNAME=root
 
 # Password to authenticate with the MongoDB service.
 # Default: MONGO_PASSWORD=openIM123

@@ -152,7 +93,7 @@ MONGO_DATABASE=openIM_v3

 # ----- Redis Configuration -----
 # Address or hostname for the Redis service.
 # Default: REDIS_ADDRESS=172.28.0.1
-REDIS_ADDRESS=172.28.0.4
+REDIS_ADDRESS=172.28.0.3
 
 # Port on which Redis in-memory data structure store is running.
 # Default: REDIS_PORT=16379

@@ -165,7 +106,10 @@ REDIS_PASSWORD=openIM123

 # ----- Kafka Configuration -----
 # Address or hostname for the Kafka service.
 # Default: KAFKA_ADDRESS=172.28.0.1
-KAFKA_ADDRESS=172.28.0.5
+KAFKA_ADDRESS=172.28.0.4
 
+# Kafka username to authenticate with the Kafka service.
+# KAFKA_USERNAME=''
+
 # Port on which Kafka distributed streaming platform is running.
 # Default: KAFKA_PORT=19092

@@ -186,7 +130,7 @@ KAFKA_OFFLINEMSG_MONGO_TOPIC=offlineMsgToMongoMysql

 # ----- MinIO Configuration ----
 # Address or hostname for the MinIO object storage service.
 # Default: MINIO_ADDRESS=172.28.0.1
-MINIO_ADDRESS=172.28.0.7
+MINIO_ADDRESS=172.28.0.6
 
 # Port on which MinIO object storage service is running.
 # Default: MINIO_PORT=10005

@@ -194,7 +138,7 @@ MINIO_PORT=10005

 
 # Access key to authenticate with the MinIO service.
 # Default: MINIO_ACCESS_KEY=root
-MINIO_ACCESS_KEY=root
+# MINIO_ACCESS_KEY=root
 
 # Secret key corresponding to the access key for MinIO authentication.
 # Default: MINIO_SECRET_KEY=openIM123

@@ -203,7 +147,7 @@ MINIO_SECRET_KEY=openIM123

 # ----- Prometheus Configuration -----
 # Address or hostname for the Prometheus service.
 # Default: PROMETHEUS_ADDRESS=172.28.0.1
-PROMETHEUS_ADDRESS=172.28.0.11
+PROMETHEUS_ADDRESS=172.28.0.10
 
 # Port on which Prometheus service is running.
 # Default: PROMETHEUS_PORT=19090

@@ -212,11 +156,11 @@ PROMETHEUS_PORT=19090

 # ----- Grafana Configuration -----
 # Address or hostname for the Grafana service.
 # Default: GRAFANA_ADDRESS=172.28.0.1
-GRAFANA_ADDRESS=172.28.0.12
+GRAFANA_ADDRESS=172.28.0.11
 
 # Port on which Grafana service is running.
-# Default: GRAFANA_PORT=3000
-GRAFANA_PORT=3000
+# Default: GRAFANA_PORT=13000
+GRAFANA_PORT=13000
 
 # ======================================
 # ============ OpenIM Web ===============

@@ -232,7 +176,7 @@ OPENIM_WEB_PORT=11001

 
 # Address or hostname for the OpenIM web service.
 # Default: OPENIM_WEB_ADDRESS=172.28.0.1
-OPENIM_WEB_ADDRESS=172.28.0.8
+OPENIM_WEB_ADDRESS=172.28.0.7
 
 # ======================================
 # ========= OpenIM Server ==============

@@ -240,7 +184,7 @@ OPENIM_WEB_ADDRESS=172.28.0.8

 
 # Address or hostname for the OpenIM server.
 # Default: OPENIM_SERVER_ADDRESS=172.28.0.1
-OPENIM_SERVER_ADDRESS=172.28.0.9
+OPENIM_SERVER_ADDRESS=172.28.0.8
 
 # Port for the OpenIM WebSockets.
 # Default: OPENIM_WS_PORT=10001

@@ -261,7 +205,7 @@ CHAT_BRANCH=main

 
 # Address or hostname for the OpenIM chat service.
 # Default: OPENIM_CHAT_ADDRESS=172.28.0.1
-OPENIM_CHAT_ADDRESS=172.28.0.10
+OPENIM_CHAT_ADDRESS=172.28.0.9
 
 # Port for the OpenIM chat API.
 # Default: OPENIM_CHAT_API_PORT=10008

@@ -283,3 +227,23 @@ SERVER_BRANCH=main

 # Port for the OpenIM admin API.
 # Default: OPENIM_ADMIN_API_PORT=10009
 OPENIM_ADMIN_API_PORT=10009
+
+# Port for the node exporter.
+# Default: NODE_EXPORTER_PORT=19100
+NODE_EXPORTER_PORT=19100
+
+# Port for the prometheus.
+# Default: PROMETHEUS_PORT=19090
+PROMETHEUS_PORT=19090
+
+# Port for the grafana.
+# Default: GRAFANA_PORT=13000
+GRAFANA_PORT=13000
+
+# Port for the admin front.
+# Default: OPENIM_ADMIN_FRONT_PORT=11002
+OPENIM_ADMIN_FRONT_PORT=11002
+
+# Port for the alertmanager.
+# Default: ALERT_MANAGER_PORT=19093
+ALERT_MANAGER_PORT=19093

@@ -0,0 +1,85 @@

+# my global config
+global:
+  scrape_interval: 15s # Set the scrape interval to every 15 seconds. Default is every 1 minute.
+  evaluation_interval: 15s # Evaluate rules every 15 seconds. The default is every 1 minute.
+  # scrape_timeout is set to the global default (10s).
+
+# Alertmanager configuration
+alerting:
+  alertmanagers:
+    - static_configs:
+        - targets: ['172.28.0.1:19093']
+
+# Load rules once and periodically evaluate them according to the global 'evaluation_interval'.
+rule_files:
+  - "instance-down-rules.yml"
+# - "first_rules.yml"
+# - "second_rules.yml"
+
+# A scrape configuration containing exactly one endpoint to scrape:
+# Here it's Prometheus itself.
+scrape_configs:
+  # The job name is added as a label "job='job_name'" to any timeseries scraped from this config.
+  # Monitored information captured by prometheus
+  - job_name: 'node-exporter'
+    static_configs:
+      - targets: [ '172.28.0.1:19100' ]
+        labels:
+          namespace: 'default'
+
+  # prometheus fetches application services
+  - job_name: 'openimserver-openim-api'
+    static_configs:
+      - targets: [ '172.28.0.1:20100' ]
+        labels:
+          namespace: 'default'
+  - job_name: 'openimserver-openim-msggateway'
+    static_configs:
+      - targets: [ '172.28.0.1:20140' ]
+        labels:
+          namespace: 'default'
+  - job_name: 'openimserver-openim-msgtransfer'
+    static_configs:
+      - targets: [ 172.28.0.1:21400, 172.28.0.1:21401, 172.28.0.1:21402, 172.28.0.1:21403 ]
+        labels:
+          namespace: 'default'
+  - job_name: 'openimserver-openim-push'
+    static_configs:
+      - targets: [ '172.28.0.1:20170' ]
+        labels:
+          namespace: 'default'
+  - job_name: 'openimserver-openim-rpc-auth'
+    static_configs:
+      - targets: [ '172.28.0.1:20160' ]
+        labels:
+          namespace: 'default'
+  - job_name: 'openimserver-openim-rpc-conversation'
+    static_configs:
+      - targets: [ '172.28.0.1:20230' ]
+        labels:
+          namespace: 'default'
+  - job_name: 'openimserver-openim-rpc-friend'
+    static_configs:
+      - targets: [ '172.28.0.1:20120' ]
+        labels:
+          namespace: 'default'
+  - job_name: 'openimserver-openim-rpc-group'
+    static_configs:
+      - targets: [ '172.28.0.1:20150' ]
+        labels:
+          namespace: 'default'
+  - job_name: 'openimserver-openim-rpc-msg'
+    static_configs:
+      - targets: [ '172.28.0.1:20130' ]
+        labels:
+          namespace: 'default'
+  - job_name: 'openimserver-openim-rpc-third'
+    static_configs:
+      - targets: [ '172.28.0.1:21301' ]
+        labels:
+          namespace: 'default'
+  - job_name: 'openimserver-openim-rpc-user'
+    static_configs:
+      - targets: [ '172.28.0.1:20110' ]
+        labels:
+          namespace: 'default'
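
Every application job in this new file follows the same five-line shape: a job name, one static target, and a `namespace` label. As an illustration of that pattern, the sketch below prints one such entry. `scrape_job` is a hypothetical helper; the diff does not say whether the file was written by hand or generated.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print one static scrape job in the layout used above.
# Usage: scrape_job <job-name> <host:port>
scrape_job() {
  local job=$1 target=$2
  printf "  - job_name: '%s'\n" "$job"
  printf "    static_configs:\n"
  printf "      - targets: [ '%s' ]\n" "$target"
  printf "        labels:\n"
  printf "          namespace: 'default'\n"
}

scrape_job openimserver-openim-push 172.28.0.1:20170
```

The two-space leading indent matches entries placed under `scrape_configs:`. The port passed in should be the service's Prometheus port (for example `20170` for push), not its RPC port.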

+27

-5

@@ -84,8 +84,8 @@ $ sudo sealos run labring/kubernetes:v1.25.0 labring/helm:v3.8.2 labring/calico:

 If you are local, you can also use Kind and Minikube to test, for example, using Kind:
 
 ```bash
-$ sGO111MODULE="on" go get sigs.k8s.io/kind@v0.11.1
-$ skind create cluster
+$ GO111MODULE="on" go get sigs.k8s.io/kind@v0.11.1
+$ kind create cluster
 ```
 
 ### Installing helm

@@ -123,6 +123,30 @@ Explore our Helm-Charts repository and read through: [Helm-Charts Repository](ht

 
 Using the helm charts repository, you can ignore the following configuration, but if you want to just use the server and scale on top of it, you can go ahead:
 
+**Use the Helm template to generate the deployment yaml file: `openim-charts.yaml`**
+
+**Gen Image:**
+
+```bash
+../scripts/genconfig.sh ../scripts/install/environment.sh ./templates/helm-image.yaml > ./charts/generated-configs/helm-image.yaml
+```
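
The command above passes an environment script and a template to `genconfig.sh` and redirects the result into `helm-image.yaml`. This diff does not show what `genconfig.sh` does internally. As a rough sketch only, assuming the template contains `${NAME}` placeholders that are filled from shell variables, the core substitution could look like this (`render_line` and `OPENIM_IMAGE_TAG` are hypothetical names, not taken from the repository):

```bash
#!/usr/bin/env bash
# Hypothetical helper: replace every ${NAME} in one line of text with the
# value of the shell variable NAME. Unset variables become empty strings.
render_line() {
  local line=$1 name value placeholder
  local re='\$\{([A-Za-z_][A-Za-z0-9_]*)\}'
  while [[ $line =~ $re ]]; do
    name=${BASH_REMATCH[1]}
    value=${!name-}                 # indirect expansion, empty if unset
    placeholder="\${${name}}"       # the literal text ${NAME}
    # Quoting both sides keeps the replacement literal.
    line=${line//"$placeholder"/"$value"}
  done
  printf '%s\n' "$line"
}

OPENIM_IMAGE_TAG="v3.5.0"
render_line 'tag: ${OPENIM_IMAGE_TAG}'
```

A whole template could be processed by reading it line by line and calling `render_line` on each line. The loop would not terminate if a value itself contained its own placeholder, so a real script would need a guard for that case.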

+
+**Gen Charts:**
+
+```bash
+for chart in ./charts/*/; do
+  if [[ "$chart" == *"generated-configs"* || "$chart" == *"helmfile.yaml"* ]]; then
+    continue
+  fi
+
+  if [ -f "${chart}values.yaml" ]; then
+    helm template "$chart" -f "./charts/generated-configs/helm-image.yaml" -f "./charts/generated-configs/config.yaml" -f "./charts/generated-configs/notification.yaml" >> openim-charts.yaml
+  else
+    helm template "$chart" >> openim-charts.yaml
+  fi
+done
+```
+
 **Use Helmfile:**
 

```bash

|

||||